It all started one day when we tried to count the votes.

So you know how ForceRank.it works, right? Your group ranks all the choices, then we add up how many points each choice gets and boom, we show which choice is the most popular.

Right?

Well, it turns out that this is one of those cases where "the obvious way" can produce very unintuitive (and hence arguably "wrong") results.

How is that possible?

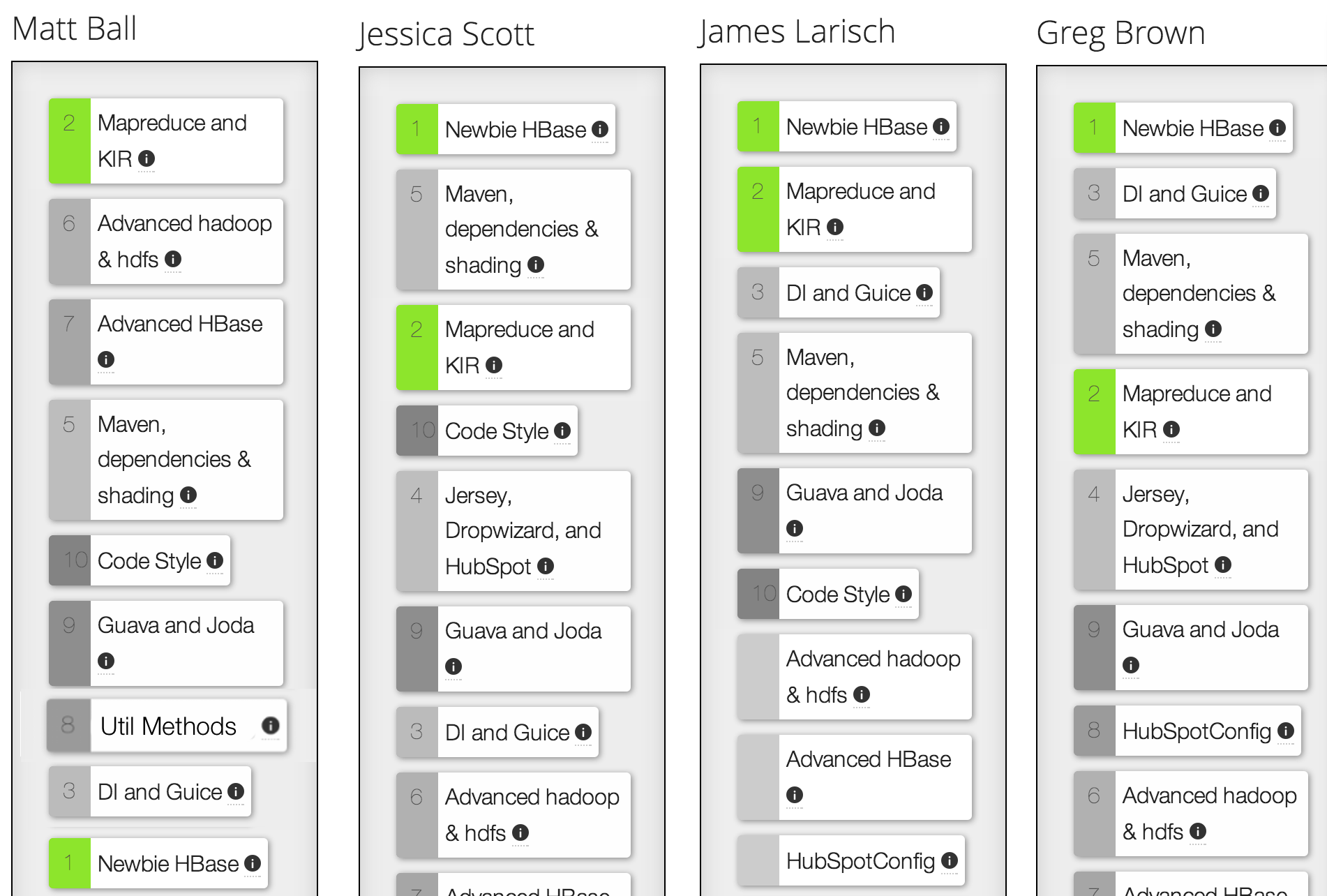

Let's see an example. This is a poll that one of our users created to figure out what topic should be the subject of his tech talk. Give it a quick look and you'll see that three out of four people had the same first choice. So picking a winner should be easy, right?

But that's not what happened.

The first version of our algorithm picked "Mapreduce and KIR" as the winner. How is that possible, you ask? Well, let's do the math together and add up how many points each option should get. I'll highlight just those options below.

With 9 options on each ballot, a first choice is worth 9 points and a last choice is worth 1. So "Mapreduce and KIR" gets: 9 points from Matt, 7 from Jessica, 8 from James and 6 from Greg, totalling 30.

And "Newbie HBase" gets: 1 from Matt, 9 from Jessica, 9 from James and 9 from Greg, totalling 28.
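The tally above is easy to reproduce. Here is a minimal sketch in Python that uses only the points quoted in this post for the two highlighted options; the variable names are ours for illustration and are not taken from the ForceRank code.

```python
# Points each voter gave the two highlighted options, as quoted in the post
# (9 = ranked first of nine options, 1 = ranked last).
points = {
    "Mapreduce and KIR": {"Matt": 9, "Jessica": 7, "James": 8, "Greg": 6},
    "Newbie HBase": {"Matt": 1, "Jessica": 9, "James": 9, "Greg": 9},
}

# "The obvious way": add up the points and pick the biggest total.
totals = {option: sum(by_voter.values()) for option, by_voter in points.items()}
winner = max(totals, key=totals.get)

print(totals)  # {'Mapreduce and KIR': 30, 'Newbie HBase': 28}
print(winner)  # Mapreduce and KIR
```

One last-place vote from Matt costs "Newbie HBase" 8 points, which is more than its three first-place votes gain over "Mapreduce and KIR" (2 + 1 + 3 = 6).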

Hrrmph

We dubbed this the "Matt Ball" effect, but the more canonical description is that our algorithm fails the "Majority Criterion", which states: "if one candidate is preferred by a majority (more than 50%) of voters, then that candidate must win". In our poll, "Newbie HBase" was the first choice of three out of four voters, and it still lost.
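To make the failure concrete, here is a small sketch that checks the criterion against the same two options. It reads "preferred by a majority" as "ranked first by more than half of the voters"; `majority_favorite` and `TOP_SCORE` are hypothetical names we made up for this example.

```python
# Points quoted in the post for the two highlighted options.
points = {
    "Mapreduce and KIR": {"Matt": 9, "Jessica": 7, "James": 8, "Greg": 6},
    "Newbie HBase": {"Matt": 1, "Jessica": 9, "James": 9, "Greg": 9},
}
TOP_SCORE = 9  # with nine options, a first-place ranking is worth 9 points


def majority_favorite(points, top_score):
    """Return the option ranked first by more than half the voters, or None."""
    voters = {voter for by_voter in points.values() for voter in by_voter}
    for option, by_voter in points.items():
        first_places = sum(1 for score in by_voter.values() if score == top_score)
        if first_places > len(voters) / 2:
            return option
    return None


favorite = majority_favorite(points, TOP_SCORE)
points_winner = max(points, key=lambda option: sum(points[option].values()))

print(favorite)                   # Newbie HBase
print(points_winner)              # Mapreduce and KIR
print(favorite == points_winner)  # False: the Majority Criterion is violated
```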

So what did we do?

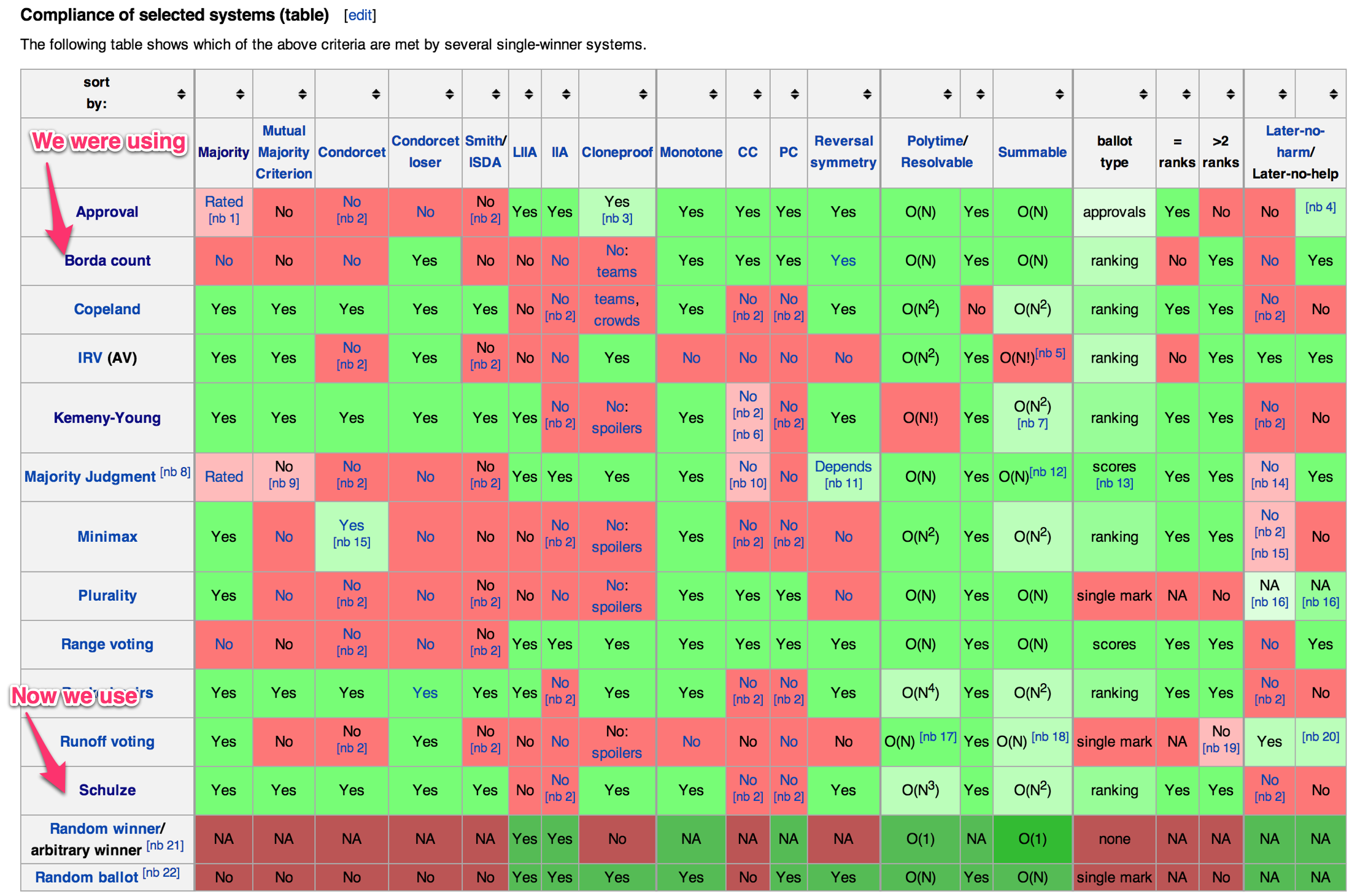

Well, we went to Wikipedia and dug into voting systems. Unsurprisingly, it turns out that there's been a lot of high-quality thinking on this subject. We looked into a number of methods, and the one that seemed like the best fit for ForceRank was the Schulze method. In a nutshell, Schulze breaks the vote down into a ton of mini rankings, one for each pair of options, in what they call a "pairwise analysis". Next it does a neat bit of graph magic (finding the strongest chains of head-to-head wins between options) to pull out a series of winners.

The result is that it is guaranteed to ace the "Majority Criterion" (which our previous method failed) and a number of other conditions as well.
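For the curious, the pairwise analysis and the graph step can be sketched in a few lines of Python. This is a textbook-style version of the Schulze method, not the code ForceRank runs. The ballots are a simplified, hypothetical three-option version of the poll: the relative order of "Newbie HBase" and "Mapreduce and KIR" matches the points quoted earlier, while "Placeholder talk" and its positions are invented for illustration.

```python
from itertools import permutations


def schulze_ranking(ballots):
    """Rank options with the Schulze method.

    ballots: list of lists, each ordered best-first over the same options.
    Ties in the final order are broken alphabetically.
    """
    options = sorted(ballots[0])

    # Pairwise analysis: d[a][b] = number of voters who rank a above b.
    d = {a: {b: 0 for b in options} for a in options}
    for ballot in ballots:
        position = {option: i for i, option in enumerate(ballot)}
        for a, b in permutations(options, 2):
            if position[a] < position[b]:
                d[a][b] += 1

    # Graph step: p[a][b] = strength of the strongest path from a to b,
    # where a path is only as strong as its weakest head-to-head win.
    p = {a: {b: 0 for b in options} for a in options}
    for a, b in permutations(options, 2):
        if d[a][b] > d[b][a]:
            p[a][b] = d[a][b]
    for k in options:  # k is the intermediate stop on the path
        for i in options:
            for j in options:
                if len({i, j, k}) == 3:
                    p[i][j] = max(p[i][j], min(p[i][k], p[k][j]))

    # a finishes ahead of b when its strongest path to b beats the reverse.
    wins = {a: sum(p[a][b] > p[b][a] for b in options if b != a) for a in options}
    return sorted(options, key=lambda a: -wins[a])


# Hypothetical, simplified ballots (best first); see the note above.
ballots = [
    ["Mapreduce and KIR", "Placeholder talk", "Newbie HBase"],  # Matt
    ["Newbie HBase", "Mapreduce and KIR", "Placeholder talk"],  # Jessica
    ["Newbie HBase", "Mapreduce and KIR", "Placeholder talk"],  # James
    ["Newbie HBase", "Mapreduce and KIR", "Placeholder talk"],  # Greg
]
ranking = schulze_ranking(ballots)
print(ranking)  # ['Newbie HBase', 'Mapreduce and KIR', 'Placeholder talk']
```

Because "Newbie HBase" wins every head-to-head contest 3 to 1, no path through the graph can overturn it, which is why a majority's first choice always comes out on top.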

The only real downside is that the Schulze method is a bit more difficult to explain, but at the end of the day it delivers an answer that feels much more intuitively like the "fair" winner of a vote.

Next Up?

Next on our list is building in ways to see the patterns in your group's rankings. There's a lot of really interesting information to be gleaned from the data that ForceRank provides, and our goal is to give you a quick, easy-to-comprehend picture of your group's complex preferences, and the outliers within.